A governance setup for data use consists of a range of elements that balance analysts' local agility in developing new or modified analyses and reports against the company's need for shared data: a common version of the truth.

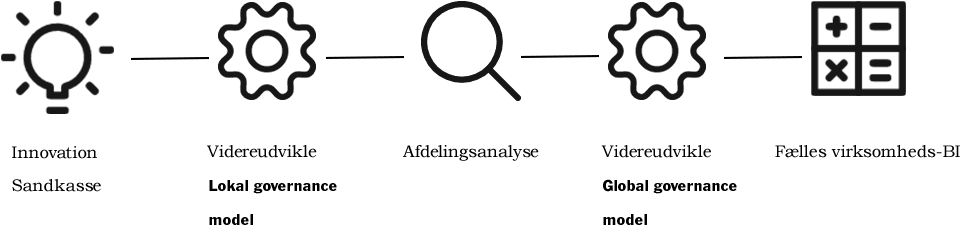

Many new or revised reports and analyses begin in a sandbox environment as a test of an idea. If the analysis delivers the insights the analyst expected, perhaps after several iterations, it can be elevated to, for example, a region-specific report or a common report for the entire company, distributed through a common BI platform.

A sandbox analysis is typically quick to build, requires few resources, and may have a short lifetime. It could, for example, be a test of potential correlations in the data. If the analysis is elevated to a regional or department-specific analysis, the requirements for its robustness increase: operations and maintenance, support, and monitoring must be established, which increases delivery time and resource requirements.

As an analysis is developed further, quality can be ensured through a procedure in which a colleague or a member of the BI team reviews it before it becomes a regional or departmental report.

For the company's common reports, the requirements increase once again. Here, quality is expected to be high enough that reports and analyses can be delivered to management or external authorities, which further increases resource requirements and the time from request to final delivery.

This tiered approach also describes the lifecycle of a report or analysis: it starts as an idea or hypothesis, moves on to a department that uses it as part of its work, and may finally be elevated to provide value for the entire company.

Maintain relevance

The final stage in the lifecycle of an analysis or report may be that it becomes less relevant and is used less and less, for example because other analyses have replaced it. More generally, declining use of an analysis may reflect a change in business focus or, in some cases, logic that is no longer sufficient, so that users combine its data with other data to get the answers they need.

Because the BI solution should always remain relevant, it is generally recommended to monitor the use of the reports and dashboards delivered to the company. A decline in usage may signal that a report needs corrections or, if it is no longer used at all, that it should be phased out, so that only relevant reports are maintained.
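To make the monitoring idea concrete, here is a minimal Python sketch, assuming monthly view counts per report can be exported from the BI platform's usage logs. The function name `review_usage`, the three-month window, and the 50 % decline threshold are hypothetical choices for illustration, not a feature of any particular BI tool. The sketch compares recent average usage with the preceding period and suggests keeping, reviewing, or phasing out each report.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class UsageReview:
    report: str
    action: str    # "keep", "review" or "phase_out" (hypothetical labels)
    change: float  # relative change in average views, recent vs. baseline


def review_usage(
    monthly_views: dict[str, list[int]],
    window: int = 3,
    decline_threshold: float = 0.5,
) -> list[UsageReview]:
    """Compare each report's average views in the last `window` months
    with the `window` months before that (an assumed rule of thumb)."""
    results = []
    for report, views in monthly_views.items():
        if len(views) < 2 * window:
            continue  # not enough history to judge a trend
        baseline = mean(views[-2 * window:-window])
        recent = mean(views[-window:])
        change = (recent - baseline) / baseline if baseline else 0.0
        if recent == 0:
            action = "phase_out"  # no longer used at all
        elif change <= -decline_threshold:
            action = "review"     # sharp drop: check whether corrections are needed
        else:
            action = "keep"
        results.append(UsageReview(report, action, round(change, 2)))
    return results
```

For example, a report whose monthly views fell from 80, 75, 70 to 30, 25, 20 shows a change of about -0.67 and is flagged for review, while a report with no views in the last three months is flagged for phasing out. A flag is best treated as a prompt for a conversation with the report owner rather than an automatic decision.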

Visibility of approval level

Another important aspect of a report governance process is ensuring that everyone who uses a report can see its approval level. Marking a report as approved for company use, for example with a watermark, or indicating that its owner is a departmental analyst who can be contacted with questions about a departmental report, are ways to ensure that all users understand the premise for using a given report. Finally, it is good practice for every report to state its purpose, the definitions and calculations used, and when the data was last updated.
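The approval level and documentation described above can be captured in a simple metadata structure that the BI team checks before publishing a report. The Python sketch below is a hypothetical illustration: the three approval levels, the field names, and the label texts are assumptions, and real BI platforms have their own mechanisms for marking approved content.

```python
from dataclasses import dataclass, field
from datetime import datetime
from enum import Enum
from typing import Optional


class ApprovalLevel(Enum):
    SANDBOX = "sandbox"
    DEPARTMENT = "department"
    COMPANY = "company"


@dataclass
class ReportMetadata:
    name: str
    approval_level: ApprovalLevel
    owner: str
    purpose: str = ""
    definitions: dict[str, str] = field(default_factory=dict)  # term -> calculation
    last_refreshed: Optional[datetime] = None


def approval_label(meta: ReportMetadata) -> str:
    """Text shown to users, e.g. as a watermark or header on the report."""
    if meta.approval_level is ApprovalLevel.COMPANY:
        return "Approved for company use"
    if meta.approval_level is ApprovalLevel.DEPARTMENT:
        return f"Departmental report, questions to {meta.owner}"
    return f"Sandbox analysis by {meta.owner}, not approved"


def missing_documentation(meta: ReportMetadata) -> list[str]:
    """List the items a report should state but currently does not."""
    missing = []
    if not meta.purpose.strip():
        missing.append("purpose")
    if not meta.definitions:
        missing.append("definitions and calculations")
    if meta.last_refreshed is None:
        missing.append("last data update")
    return missing
```

A check like `missing_documentation` could, for instance, be part of the review step before a report is promoted from departmental to company level.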

By describing and working with this maturation of data, so that valuable analyses made in the sandbox can be moved into the company's common solution, you get the best of both approaches: local agility and efficient data management. It is more efficient because you avoid manual analysis where automated reporting is the better option.
